What Are Patching Agents? (Architecture, Examples, and Future of Autonomous Code Repair)

Patching agents are a new class of AI systems designed to autonomously detect, diagnose, and repair bugs or security vulnerabilities in software code. Unlike traditional static analysis tools or simple code-completion assistants, these agents act like autonomous software engineers: they explore codebases, reason about root causes, generate candidate fixes, apply them, test the results, and iterate until the issue is resolved — all with minimal or no human intervention.

The rise of patching agents stems from the explosive growth of large language models (LLMs) and agentic AI. As codebases balloon in size and complexity, and as AI-powered vulnerability scanners uncover flaws faster than humans can fix them, patching agents promise to close the remediation gap. They represent a key step toward fully autonomous code repair, shifting software maintenance from a labor-intensive, reactive process to one that is proactive, scalable, and continuous.

Architecture of Patching Agents

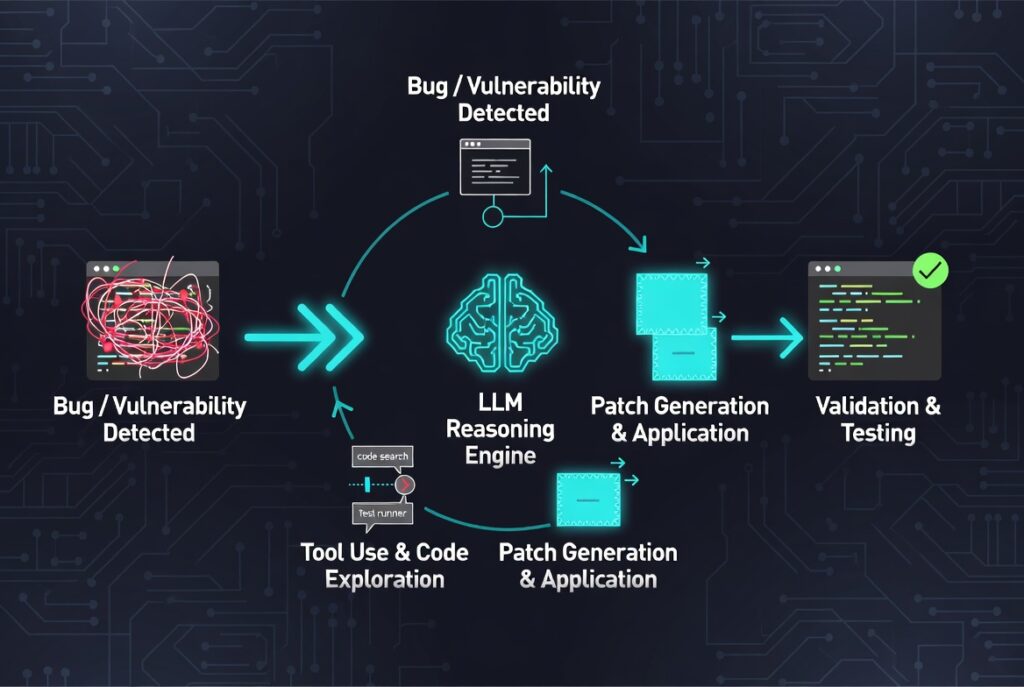

At their core, most patching agents follow an agentic architecture built around three main elements:

- LLM as the Reasoning Engine

A powerful LLM (such as GPT-4o, Claude Sonnet, or Gemini Deep Think models) serves as the “brain.” It receives a dynamic prompt that includes the bug report, failing tests, and accumulated context from previous actions. The model decides what to do next by outputting structured commands rather than raw code.

- Tool-Use and Environment Interaction

Agents are equipped with a suite of specialized tools that let them interact with the real codebase and runtime environment. Common tools include:

- Code readers and search functions (to locate buggy files or similar patterns)

- Patch applicators (to edit source files)

- Test runners and compilers (to validate changes)

- Static/dynamic analyzers, fuzzers, or symbolic solvers (for deeper reasoning)

- Debuggers and reproducers (to recreate the failing scenario)

These tools run inside a sandboxed environment (often Docker containers) to ensure safety and reproducibility.

- Planning and Feedback Loop

Unlike one-shot prompting or rigid scripts, patching agents use iterative planning mechanisms such as ReAct-style loops, finite state machines (FSM), or multi-agent orchestration. The agent typically cycles through phases like:

- Understand → Analyze the bug and failing tests

- Collect → Gather relevant code context and repair ingredients

- Generate & Apply → Propose and apply a patch

- Validate & Iterate → Run tests, analyze failures, and refine

Advanced systems add validation layers (e.g., an “LLM judge” or differential testing) to catch regressions before a patch is accepted. Some agents are single-threaded for simplicity, while others use multi-agent collaboration where specialized sub-agents handle localization, patch generation, critique, or verification.
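To make the tool-use element concrete, here is a minimal Python sketch of how an agent harness might dispatch the structured commands an LLM emits. Everything in it is hypothetical: the tool names (`read_file`, `search_code`, `apply_patch`), the JSON command shape, and the in-memory `REPO` dictionary, which stands in for a sandboxed checkout rather than a real Docker container.

```python
import json
from typing import Callable, Dict

# Hypothetical in-memory "repository" standing in for a sandboxed checkout.
REPO: Dict[str, str] = {
    "calc.py": "def add(a, b):\n    return a - b\n",
}

def read_file(path: str) -> str:
    """Return the contents of a file in the (simulated) sandbox."""
    return REPO[path]

def search_code(pattern: str) -> str:
    """Return 'path:line' for every line containing the pattern."""
    hits = []
    for path, text in REPO.items():
        for lineno, line in enumerate(text.splitlines(), start=1):
            if pattern in line:
                hits.append(f"{path}:{lineno}")
    return "\n".join(hits) or "no matches"

def apply_patch(path: str, old: str, new: str) -> str:
    """Replace exactly one occurrence of a snippet; refuse missing or ambiguous matches."""
    text = REPO[path]
    count = text.count(old)
    if count != 1:
        return f"error: expected 1 match, found {count}"
    REPO[path] = text.replace(old, new)
    return "ok"

# Hypothetical tool registry: the only actions the model is allowed to request.
TOOLS: Dict[str, Callable[..., str]] = {
    "read_file": read_file,
    "search_code": search_code,
    "apply_patch": apply_patch,
}

def dispatch(command_json: str) -> str:
    """Execute one structured command, e.g.
    {"tool": "search_code", "args": {"pattern": "a - b"}}."""
    command = json.loads(command_json)
    tool = TOOLS.get(command.get("tool"))
    if tool is None:
        return f"error: unknown tool {command.get('tool')!r}"
    try:
        return tool(**command.get("args", {}))
    except (KeyError, TypeError) as exc:
        # Errors become observations the agent can react to on its next turn.
        return f"error: {exc}"
```

Returning errors as strings instead of raising them is a deliberate choice in this sketch: the message becomes the next observation in the agent's prompt, so a bad tool call costs one turn rather than crashing the loop.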

This architecture allows the agent to adapt dynamically — much like a human developer who reads code, experiments, and backtracks — rather than following a hardcoded workflow.
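The Generate & Apply and Validate & Iterate phases can be sketched as a small feedback loop. This is a toy under stated assumptions: `scripted_llm` is a hypothetical stand-in that replays canned candidate patches instead of calling a model, the Understand and Collect phases are reduced to the accumulated failure messages, and `run_tests` executes code in-process with `exec`, which a real agent would only do inside a sandbox.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

BUGGY = "def add(a, b):\n    return a - b\n"

@dataclass
class RepairState:
    source: str                                          # current program text
    feedback: List[str] = field(default_factory=list)   # failure messages seen so far

def run_tests(source: str) -> Optional[str]:
    """Hypothetical test runner: returns None on success, else a failure message."""
    namespace: dict = {}
    exec(source, namespace)  # toy only; real agents run tests in a sandbox
    result = namespace["add"](2, 3)
    return None if result == 5 else f"add(2, 3) returned {result}, expected 5"

# Canned candidates a model might propose, in order (hypothetical).
CANDIDATES = [
    "def add(a, b):\n    return a * b\n",
    "def add(a, b):\n    return a + b\n",
]

def scripted_llm(state: RepairState) -> str:
    """Stand-in for an LLM call: picks the next candidate after each failure."""
    return CANDIDATES[len(state.feedback) - 1]

def repair_loop(state: RepairState,
                propose: Callable[[RepairState], str],
                max_iters: int = 5) -> Optional[str]:
    """Validate, feed the failure back, propose a new patch; stop on success or budget."""
    for _ in range(max_iters):
        failure = run_tests(state.source)   # Validate
        if failure is None:
            return state.source
        state.feedback.append(failure)      # failure becomes context for the next attempt
        state.source = propose(state)       # Generate & Apply
    return state.source if run_tests(state.source) is None else None
```

Running `repair_loop(RepairState(BUGGY), scripted_llm)` rejects the first candidate (it returns 6), feeds that failure back, and accepts the second. The `max_iters` budget is what keeps an agent from looping indefinitely on a bug it cannot fix.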

Real-World Examples

Several pioneering patching agents have demonstrated impressive results on public benchmarks and real open-source projects.

RepairAgent (University of Stuttgart & UC Davis) was one of the first fully autonomous LLM-based agents for program repair. It targets Java projects and uses a set of 14 purpose-built tools plus a dynamic prompt updated after every tool call. Guided by a finite state machine, it freely interleaves bug localization, code exploration, and fix validation. On the Defects4J benchmark (a standard suite of real Java bugs), RepairAgent correctly fixed 164 bugs — including 39 that previous state-of-the-art techniques could not resolve — at an average cost of roughly 14 cents per bug using GPT-3.5.
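The general idea of an FSM-guided agent, restricting which tools may be called in each phase while leaving the order of calls to the model, can be sketched as follows. The phases, tool names, and transitions here are hypothetical simplifications loosely modeled on the Understand → Collect → Generate → Validate cycle described earlier; they are not RepairAgent's actual state machine or its 14 tools.

```python
from enum import Enum, auto
from typing import Dict, Set, Tuple

class Phase(Enum):
    UNDERSTAND = auto()
    COLLECT = auto()
    FIX = auto()
    DONE = auto()

# Hypothetical tool whitelist per phase.
ALLOWED_TOOLS: Dict[Phase, Set[str]] = {
    Phase.UNDERSTAND: {"read_file", "run_tests", "state_hypothesis"},
    Phase.COLLECT: {"read_file", "search_code", "ready_to_fix"},
    Phase.FIX: {"apply_patch", "run_tests", "discard_hypothesis"},
    Phase.DONE: set(),
}

# Tool calls that move the agent to a new phase; other allowed calls keep it in place.
TRANSITIONS: Dict[Tuple[Phase, str], Phase] = {
    (Phase.UNDERSTAND, "state_hypothesis"): Phase.COLLECT,
    (Phase.COLLECT, "ready_to_fix"): Phase.FIX,
    (Phase.FIX, "discard_hypothesis"): Phase.UNDERSTAND,  # backtrack on a wrong hypothesis
}

def step(phase: Phase, tool: str, tests_pass: bool = False) -> Phase:
    """Validate a tool call against the current phase and return the next phase."""
    if tool not in ALLOWED_TOOLS[phase]:
        raise ValueError(f"{tool!r} is not allowed in phase {phase.name}")
    if phase is Phase.FIX and tool == "run_tests" and tests_pass:
        return Phase.DONE
    return TRANSITIONS.get((phase, tool), phase)
```

The state machine does not dictate a fixed sequence of actions; within a phase the agent may call any allowed tool as often as it likes. It only rules out calls that make no sense yet, such as `apply_patch` before any hypothesis exists, which is how an FSM can guide an agent while still letting it interleave exploration and validation.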

CodeMender (Google DeepMind) takes patching into the security domain. Built on Gemini Deep Think reasoning models, it combines LLM reasoning with traditional program analysis (static analysis, fuzz testing, symbolic solvers, and differential testing). CodeMender works both reactively, instantly patching newly discovered vulnerabilities, and proactively, rewriting existing code to eliminate entire classes of bugs (for example, by adding memory-safety annotations). In the first six months of its development, it upstreamed 72 security fixes to open-source projects, some of them in codebases as large as 4.5 million lines. All patches are reviewed by human researchers before being submitted upstream, but the agent handles the heavy lifting of diagnosis, patching, and self-correction.
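Differential testing, one of the validation techniques listed above, compares a patched function against the original on many inputs. The following sketch is a generic illustration of the idea, not CodeMender's implementation: the length-prefixed parser is a hypothetical example, and Python's `IndexError` stands in for the out-of-bounds read a memory-unsafe language would allow. The check requires that the patch never crashes and that it behaves identically on every input the original handled without crashing.

```python
import random
from typing import Callable, List, Optional, Tuple

def parse_original(buf: bytes) -> bytes:
    """Buggy: trusts the length byte and can read past the end of the buffer."""
    n = buf[0]
    return bytes(buf[1 + k] for k in range(n))  # IndexError on short or empty input

def parse_patched(buf: bytes) -> Optional[bytes]:
    """Patched: rejects inputs whose declared length exceeds the available data."""
    if not buf or buf[0] > len(buf) - 1:
        return None
    return buf[1:1 + buf[0]]

def differential_test(original: Callable[[bytes], object],
                      patched: Callable[[bytes], object],
                      inputs: List[bytes]) -> List[Tuple[bytes, str]]:
    """Return (input, reason) pairs where the patch crashes or changes behavior."""
    problems: List[Tuple[bytes, str]] = []
    for buf in inputs:
        try:
            expected, original_crashed = original(buf), False
        except IndexError:
            expected, original_crashed = None, True
        try:
            actual = patched(buf)
        except Exception:
            problems.append((buf, "patched version crashes"))
            continue
        # Only inputs the original handled cleanly must produce identical output.
        if not original_crashed and actual != expected:
            problems.append((buf, "behavior changed"))
    return problems

def random_inputs(count: int, seed: int = 0) -> List[bytes]:
    """Small random byte strings; many have a length byte larger than the data."""
    rng = random.Random(seed)
    return [bytes(rng.randrange(10) for _ in range(rng.randrange(8))) for _ in range(count)]
```

An empty result from `differential_test(parse_original, parse_patched, random_inputs(500))` is evidence, not proof, that the patch only changes behavior on the crashing inputs; random inputs can miss edge cases that a coverage-guided fuzzer might find.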

Other notable systems include PatchPilot (a cost-efficient agent optimized for SWE-bench-style GitHub issues) and various multi-agent frameworks that split responsibilities across localization, reproduction, editing, and review agents. These examples show that patching agents are no longer theoretical: they are already shipping real fixes to production-grade code.
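The multi-agent split described above can be pictured as a pipeline of role-specific agents passing a shared work item. In this hypothetical sketch each role is a plain function standing in for an LLM-backed sub-agent; the `Ticket` fields, role names, and the reviewer's approval rule are illustrative assumptions, not the design of PatchPilot or any specific framework.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

@dataclass
class Ticket:
    issue: str
    location: Optional[str] = None      # set by the localizer
    reproduced: bool = False            # set by the reproducer
    patch: Optional[str] = None         # set by the editor
    approved: bool = False              # set by the reviewer
    log: List[str] = field(default_factory=list)

def localizer(t: Ticket) -> Ticket:
    t.location = "calc.py:2"            # hypothetical result of a code search
    t.log.append("localized")
    return t

def reproducer(t: Ticket) -> Ticket:
    t.reproduced = True                 # e.g. a new failing test was written and run
    t.log.append("reproduced")
    return t

def editor(t: Ticket) -> Ticket:
    t.patch = "-    return a - b\n+    return a + b\n"  # hypothetical diff
    t.log.append("patched")
    return t

def reviewer(t: Ticket) -> Ticket:
    # Approve only patches for bugs that were both localized and reproduced.
    t.approved = t.location is not None and t.reproduced and t.patch is not None
    t.log.append("approved" if t.approved else "rejected")
    return t

def run_pipeline(t: Ticket, roles: List[Callable[[Ticket], Ticket]]) -> Ticket:
    """Hand the ticket to each role in turn."""
    for role in roles:
        t = role(t)
    return t
```

Separating roles this way can give each sub-agent a narrower prompt and toolset, and it gives the reviewer a natural place to reject a patch whose bug was never reproduced.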

The Future of Autonomous Code Repair

The next wave of patching agents will likely integrate deeply into developer workflows. Imagine CI/CD pipelines that automatically spin up a patching agent whenever a test fails or a vulnerability scanner flags an issue, submitting a polished pull request with explanations, tests, and validation evidence.
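As a hedged sketch of such a CI/CD trigger, the snippet below reads a JUnit-style XML test report (a format many CI systems produce) and turns each failing test into a task description for a patching agent. The report contents, the task string format, and `should_launch_agent` are hypothetical; only Python's standard `xml.etree.ElementTree` module is assumed.

```python
import xml.etree.ElementTree as ET
from typing import List

# Hypothetical report produced by a CI test step.
REPORT = """<testsuite name="calc" tests="2" failures="1">
  <testcase classname="tests.test_calc" name="test_add">
    <failure message="assert -1 == 5">AssertionError</failure>
  </testcase>
  <testcase classname="tests.test_calc" name="test_sub"/>
</testsuite>"""

def failing_tests(report_xml: str) -> List[str]:
    """Return 'classname.name: message' for each failed or errored test case."""
    root = ET.fromstring(report_xml)
    tasks = []
    for case in root.iter("testcase"):
        problem = case.find("failure")
        if problem is None:
            problem = case.find("error")
        if problem is not None:
            label = f"{case.get('classname')}.{case.get('name')}"
            tasks.append(f"{label}: {problem.get('message', '')}")
    return tasks

def should_launch_agent(report_xml: str) -> bool:
    """Hypothetical CI hook: only spin up a patching agent when something failed."""
    return bool(failing_tests(report_xml))
```

Each returned string could seed the agent's initial prompt, alongside the failing test's source, so that the pull request it eventually opens can be traced back to a concrete CI failure.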

We can expect several key advances:

- Multi-agent collaboration for handling ultra-complex, cross-repository bugs

- Better verification through hybrid neuro-symbolic methods and continuous fuzzing

- Cost and efficiency improvements as smaller, specialized models and smarter tool-calling reduce token usage

- Proactive self-healing where agents continuously scan and refactor codebases to prevent classes of bugs before they appear

- Enterprise-grade guardrails including human-in-the-loop approval for critical changes, audit logs, and policy-as-code controls
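The guardrails item can be illustrated with a tiny policy-as-code check. In this hypothetical sketch the policy is plain Python data: a list of glob patterns for critical paths that always require human approval, plus a cap on how many changed lines may be merged unattended. The patterns, the limit, and the function name are made up for illustration.

```python
from fnmatch import fnmatch
from typing import Dict, List

# Hypothetical policy: patches touching these paths always need a human approver.
CRITICAL_PATHS: List[str] = ["auth/*", "crypto/*", "*.sql"]
MAX_AUTO_LINES = 20  # hypothetical cap on changed lines for unattended merges

def review_requirement(changed_lines: Dict[str, int]) -> str:
    """Map {file path: number of changed lines} to 'auto-merge' or 'human-approval'."""
    for path in changed_lines:
        if any(fnmatch(path, pattern) for pattern in CRITICAL_PATHS):
            return "human-approval"
    if sum(changed_lines.values()) > MAX_AUTO_LINES:
        return "human-approval"
    return "auto-merge"
```

Keeping the policy as data means it can be versioned, reviewed, and audited like any other code, which is the core idea behind policy-as-code controls.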

Challenges remain: hallucinations, over-editing, verifying semantic correctness (a patch that passes the available tests can still be wrong), and the risk of introducing subtle regressions. Rapid progress in reasoning models and validation techniques is narrowing these gaps, though not yet closing them. Ethical considerations around autonomy, accountability, and the security of the agents themselves will also shape adoption.
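Over-editing, at least, is easy to measure mechanically. A simple heuristic, assumed here rather than taken from any of the systems described, is to count the lines a patch removes and adds using `difflib.SequenceMatcher` from Python's standard library, and to flag patches that touch far more code than a minimal fix would. The `budget` default is an arbitrary illustrative value.

```python
import difflib

def changed_line_count(before: str, after: str) -> int:
    """Count removed plus added lines between two versions of a file."""
    a, b = before.splitlines(), after.splitlines()
    matcher = difflib.SequenceMatcher(a=a, b=b, autojunk=False)
    changed = 0
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag != "equal":
            changed += (i2 - i1) + (j2 - j1)  # lines removed from a + lines added in b
    return changed

def looks_over_edited(before: str, after: str, budget: int = 4) -> bool:
    """Hypothetical heuristic: flag patches that change more lines than the budget."""
    return changed_line_count(before, after) > budget
```

A one-line fix to a two-line function changes 2 lines (one removed, one added) and stays within budget, while rewriting the whole function trips the check. Line counts say nothing about semantic correctness, so a check like this complements test-based validation rather than replacing it.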

In the long term, patching agents point toward a future of self-evolving software: codebases that monitor their own health, detect issues in real time, and autonomously maintain and improve themselves. Developers are likely to shift from fixing bugs to defining high-level goals, reviewing AI-generated solutions, and focusing on architecture and innovation.

Patching agents are more than just another AI tool — they are a foundational building block for autonomous software engineering. As they mature, they have the potential to dramatically accelerate development velocity while raising the baseline security and reliability of the software that powers our world.

Citations

RepairAgent: An Autonomous, LLM-Based Agent for Program Repair (arXiv)

RepairAgent GitHub Repository

Introducing CodeMender: an AI agent for code security (Google DeepMind)

Google DeepMind Develops AI Agent to Autonomously Repair Security Bugs

PatchPilot and Related Patching Agents Research

Autonomous Code-Security Agents Overview